In the world of industrial automation and process control, an instrument tag is more than just a label on a data sheet or a physical plate wired to a transmitter. It is the “DNA” of the plant. A robust instrument tagging philosophy ensures that every sensor, valve, and controller can be uniquely identified, located, and maintained throughout its lifecycle.

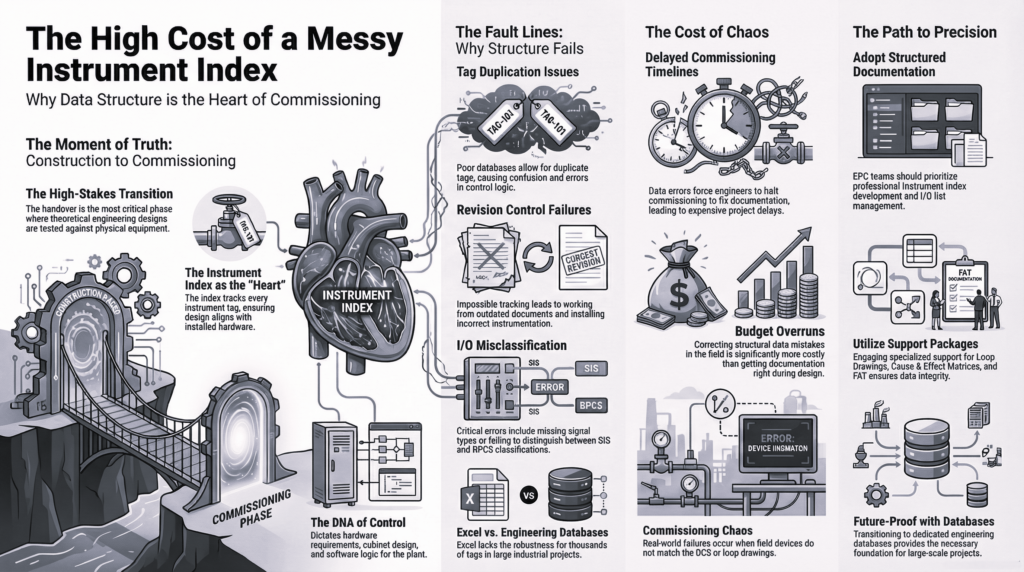

As a Senior Instrumentation Engineer, I have seen firsthand how a lack of standardization in the early stages of a project can lead to catastrophic delays during commissioning and maintenance nightmares during operations. To prevent this, the tagging system must be logical, consistent, and able to scale to large projects.

The Foundation of Tag Naming: Why It Matters

Tag naming is the process of assigning a unique alphanumeric code to an instrument. This code communicates the instrument’s function, its location within the process, and its relationship to other components. Without a clear philosophy, you end up with “Frankenstein” systems where different areas of the same plant use different naming conventions.

A logical tagging system provides:

- Seamless Communication: Engineers, operators, and maintenance technicians all speak the same language.

- Efficient Data Management: Simplified integration with Asset Management Systems (AMS) and Computerized Maintenance Management Systems (CMMS).

- Faster Troubleshooting: When an alarm trips, the tag should immediately tell the operator what the device is and where it is located.

Leveraging Industrial Standards

To achieve true standardization, we don’t need to reinvent the wheel. Several industrial standards provide the framework for professional tagging.

ISA 5.1: The Global Benchmark

ANSI/ISA-5.1, published by the International Society of Automation (ISA), is the most widely used convention in the oil and gas and chemical industries. A tag combines identification letters with a loop number: the first letter defines the measured variable, the succeeding letters define the function, and the number defines the loop. For example, “PT-101” is a Pressure Transmitter in loop 101.
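To make the letter-and-number logic concrete, here is a minimal Python sketch that decodes a simple tag such as “PT-101”. The letter tables are a small illustrative subset of the standard's identification letters, the `LETTERS-NUMBER` format is an assumption, and modifier letters (such as D for differential) are deliberately ignored; the function name `decode_tag` is hypothetical.

```python
import re

# Illustrative subset only; the full standard defines many more letters.
MEASURED_VARIABLE = {"F": "Flow", "L": "Level", "P": "Pressure", "T": "Temperature"}
FUNCTION = {
    "A": "Alarm", "C": "Control", "E": "Element",
    "I": "Indicate", "T": "Transmit", "V": "Valve",
}

# Assumed format: one first letter, one or more succeeding letters, a dash, a loop number.
TAG_PATTERN = re.compile(r"^([A-Z])([A-Z]+)-(\d+)$")


def decode_tag(tag: str) -> dict:
    """Split a simple tag into measured variable, functions, and loop number."""
    match = TAG_PATTERN.match(tag)
    if match is None:
        raise ValueError(f"Unrecognized tag format: {tag!r}")
    first, succeeding, loop = match.groups()
    if first not in MEASURED_VARIABLE:
        raise ValueError(f"Unknown measured-variable letter: {first!r}")
    unknown = [letter for letter in succeeding if letter not in FUNCTION]
    if unknown:
        raise ValueError(f"Unknown function letter(s): {unknown}")
    return {
        "measured_variable": MEASURED_VARIABLE[first],
        "functions": [FUNCTION[letter] for letter in succeeding],
        "loop": loop,
    }


print(decode_tag("PT-101"))
# {'measured_variable': 'Pressure', 'functions': ['Transmit'], 'loop': '101'}
```

The loop number is kept as a string so that leading zeros survive a round trip through the decoder.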

KKS (Kraftwerk-Kennzeichensystem)

For power generation and complex heavy industries, the KKS system is often the gold standard. Unlike the flatter structure of ISA, KKS is a hierarchical system that breaks each designation down from the overall plant to the system, the equipment unit, and the component. This hierarchy is essential on large-scale projects where thousands of identical components exist across different units.

Building a Scalable Philosophy

When designing a tagging philosophy for a new facility, scalability is the most critical factor. A system that works for a small pilot plant will often fail when applied to a multi-train refinery.

1. Hierarchical Structuring

A scalable tag should follow a “General to Specific” logic. A common structure includes:

- Unit/Area Code: (e.g., 10 for Crude Distillation)

- Equipment Type: (e.g., FV for Flow Valve)

- Loop Number: (e.g., 5001)

- Suffix: (e.g., A/B for redundant systems)
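The “General to Specific” structure can be sketched as a small Python data class that formats and parses tags. The rendered layout `UNIT-TYPE-LOOP[SUFFIX]` (for example `10-FV-5001A`), the field widths, and the names `InstrumentTag` and `parse_tag` are assumptions for illustration; a real project would take them from its own tagging specification.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class InstrumentTag:
    unit: str         # unit/area code, e.g. "10"
    type_code: str    # equipment type letters, e.g. "FV"
    loop: int         # loop number, e.g. 5001
    suffix: str = ""  # optional redundancy suffix, e.g. "A" or "B"

    def __str__(self) -> str:
        # Assumed layout: two-digit unit, letters, four-digit loop, optional suffix.
        return f"{self.unit}-{self.type_code}-{self.loop:04d}{self.suffix}"


_TAG_RE = re.compile(
    r"^(?P<unit>\d{2})-(?P<type_code>[A-Z]{2,4})-(?P<loop>\d{4})(?P<suffix>[A-Z]?)$"
)


def parse_tag(text: str) -> InstrumentTag:
    """Parse a tag string in the assumed layout back into its parts."""
    match = _TAG_RE.match(text)
    if match is None:
        raise ValueError(f"Tag does not follow the assumed layout: {text!r}")
    return InstrumentTag(
        unit=match["unit"],
        type_code=match["type_code"],
        loop=int(match["loop"]),
        suffix=match["suffix"],
    )


tag = InstrumentTag(unit="10", type_code="FV", loop=5001, suffix="A")
print(tag)                         # 10-FV-5001A
print(parse_tag("10-FV-5001B"))
```

Keeping the parts as separate fields makes it straightforward to filter or sort an instrument list by unit, type, or loop instead of relying on string matching.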

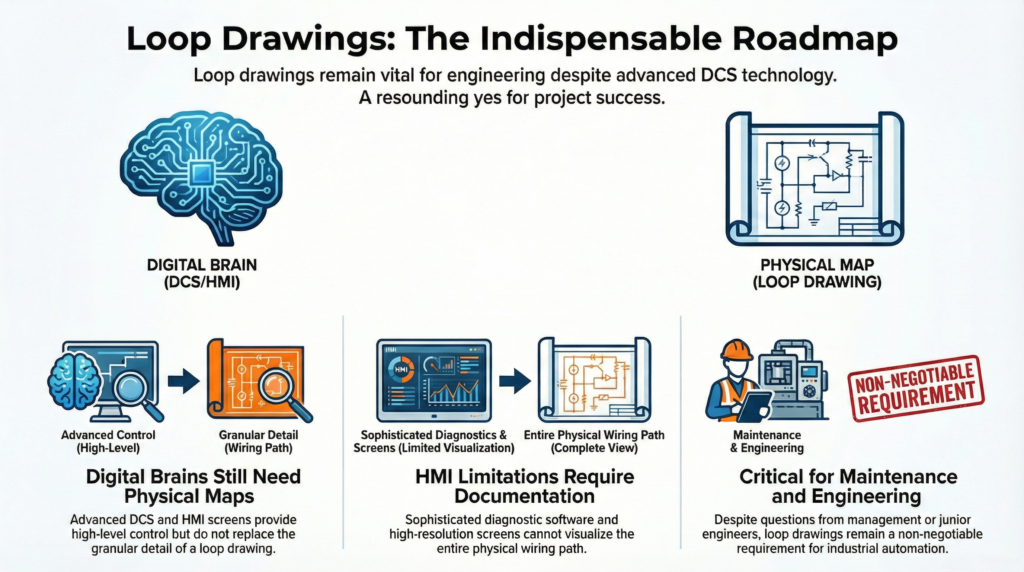

2. Consistency Across Documentation

The tag must be identical across the P&ID (Piping and Instrumentation Diagram), the Instrument Index, the wiring diagrams, and the HMI (Human Machine Interface). Any discrepancy—even a misplaced hyphen—can lead to procurement errors and safety risks.
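One way to catch such discrepancies early is to compare the tag lists exported from each document. The sketch below assumes each deliverable can be reduced to a plain set of tag strings; the document names, sample tags, and the function name `find_tag_discrepancies` are hypothetical.

```python
def find_tag_discrepancies(documents: dict[str, set[str]]) -> dict[str, list[str]]:
    """Map each tag to the documents it is missing from (only tags with gaps are listed)."""
    all_tags = set().union(*documents.values())
    report = {}
    for tag in sorted(all_tags):
        missing = sorted(name for name, tags in documents.items() if tag not in tags)
        if missing:
            report[tag] = missing
    return report


# Hypothetical exports; the HMI list contains a misplaced hyphen.
documents = {
    "P&ID": {"10-FV-5001A", "10-PT-5002"},
    "Instrument Index": {"10-FV-5001A", "10-PT-5002"},
    "HMI": {"10-FV-5001A", "10PT-5002"},
}

for tag, missing in find_tag_discrepancies(documents).items():
    print(f"{tag}: missing from {', '.join(missing)}")
# 10-PT-5002: missing from HMI
# 10PT-5002: missing from Instrument Index, P&ID
```

A misspelled tag shows up twice in the report: once as the correct tag missing from one document, and once as an unknown tag missing from all the others.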

3. Future-Proofing

Always leave “gaps” in your numbering sequences. If you number your loops 101, 102, and 103, you have no room to add a new instrument between them later. Using increments of 10 (100, 110, 120) allows for future expansion without breaking the logical flow.
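The effect of gapped numbering can be shown with a short Python sketch. It assumes loop numbers are plain integers; the helper names `initial_loop_numbers` and `free_number_between` are hypothetical.

```python
from typing import Optional


def initial_loop_numbers(start: int, count: int, step: int = 10) -> list[int]:
    """Generate the first-issue loop numbers, leaving a gap of `step` between them."""
    return [start + i * step for i in range(count)]


def free_number_between(used: set[int], lower: int, upper: int) -> Optional[int]:
    """Pick the unused number closest to the midpoint of (lower, upper), or None if full."""
    candidates = [n for n in range(lower + 1, upper) if n not in used]
    if not candidates:
        return None
    midpoint = (lower + upper) / 2
    return min(candidates, key=lambda n: abs(n - midpoint))


gapped = set(initial_loop_numbers(100, 3))      # {100, 110, 120}
print(free_number_between(gapped, 100, 110))    # 105

sequential = {101, 102, 103}
print(free_number_between(sequential, 101, 102))  # None
```

Choosing the number nearest the midpoint, rather than the first free one, keeps room on both sides for later additions.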

Challenges in Large-Scale Projects

Large-scale projects require a centralized “Tag Registry.” When multiple EPC (Engineering, Procurement, and Construction) contractors work without a unified tagging philosophy, the same tag ends up assigned to different instruments.

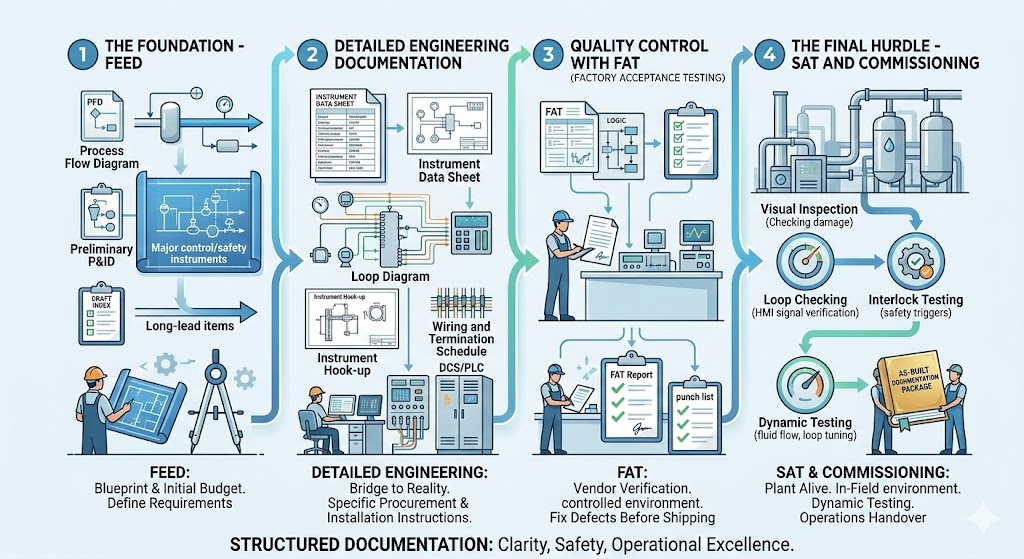
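A centralized registry can be as simple as a single lookup that refuses a tag once it has been issued. The following is a minimal in-memory sketch, not a production design; the class name `TagRegistry`, the contractor names, and the choice to normalize case and whitespace before comparing are assumptions.

```python
from typing import Optional


class TagRegistry:
    """Single source of truth for issued tags across all contractors."""

    def __init__(self) -> None:
        self._owners: dict[str, str] = {}

    @staticmethod
    def _normalize(tag: str) -> str:
        # Assumption: tags differing only in case or surrounding spaces are duplicates.
        return tag.strip().upper()

    def register(self, tag: str, contractor: str) -> None:
        key = self._normalize(tag)
        if key in self._owners:
            raise ValueError(f"{key} is already registered by {self._owners[key]}")
        self._owners[key] = contractor

    def owner_of(self, tag: str) -> Optional[str]:
        return self._owners.get(self._normalize(tag))


registry = TagRegistry()
registry.register("10-PT-5002", "Contractor A")
try:
    registry.register("10-pt-5002 ", "Contractor B")
except ValueError as error:
    print(error)  # 10-PT-5002 is already registered by Contractor A
```

In practice the registry would sit behind a shared database or engineering tool, but the rule stays the same: a tag is issued once, by one party.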

To mitigate this, the Lead Instrumentation Engineer must establish a Tagging Master Specification at the FEED (Front-End Engineering Design) stage. This document should dictate:

- Character length limits.

- Mandatory use of delimiters (dashes, underscores).

- Prohibited characters (to avoid software glitches in DCS/PLC systems).
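These three rules lend themselves to automated checking. The sketch below encodes them as a small specification object; the limit of 16 characters, the dash delimiter, the prohibited character set, and the names `TagSpec` and `violations` are placeholder assumptions, since the actual values depend on the DCS/PLC platform in use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TagSpec:
    max_length: int = 16                            # assumed limit
    delimiter: str = "-"                            # assumed mandatory delimiter
    prohibited: frozenset = frozenset(" /\\.#*")    # assumed prohibited characters


def violations(tag: str, spec: TagSpec = TagSpec()) -> list[str]:
    """Return a list of human-readable rule violations (empty if the tag complies)."""
    problems = []
    if len(tag) > spec.max_length:
        problems.append(f"length {len(tag)} exceeds limit of {spec.max_length}")
    if spec.delimiter not in tag:
        problems.append(f"missing mandatory delimiter {spec.delimiter!r}")
    bad = sorted(set(tag) & spec.prohibited)
    if bad:
        problems.append(f"prohibited characters: {bad}")
    return problems


print(violations("10-FV-5001A"))   # []
print(violations("10 FV 5001A"))
```

Running a check like this on every instrument index revision is a cheap way to enforce the specification across contractors.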

Conclusion: The ROI of a Strong Philosophy

Developing a comprehensive instrument tagging philosophy requires an upfront investment of time and discipline. However, the return on investment is realized through reduced engineering hours, faster commissioning, and enhanced plant safety.

By adhering to industrial standards like ISA 5.1 or KKS and prioritizing standardization, you lay the foundation for a digital twin that will serve the plant for decades. Remember: a tag is not just a name; it is a vital piece of information that keeps the industrial world turning.
